The web is abuzz this week with talk of the Google Books Ngram Viewer. It’s a great tool, and leads to some very interesting exploration and trend visualization. So does this tool fly in the face of my rant from a few days ago, about how Google’s improvements to search are all automated improvements, with no opportunity for the user to learn and grow?

The first problem is that because (most of) those 550 changes happen while the users are still “asleep”, users don’t actually notice them. Google doesn’t exactly go out of its way to make many of its search improvements visible to the user, and so it’s often difficult to tell whether or not something has happened. As a user, I personally don’t like that approach, because a change that is invisible or purposely hidden is a change that I have no control over, and cannot change back or alter further. And as I argued in an earlier post, the way to create passionate search users is not to give them luxury seats without waking them up. Instead, it is to give them search tools that offer a path along which they can grow, improve, and get better at searching. Do users get better at flying, or at seeing and comprehending an information landscape from 30,000 feet, if they’ve got luxury chairs? Arguably not. If anything, the luxury chairs make it harder for users to sit upright, to have a “leaning forward”, engaged experience. Users are less inclined, pun intended, to be active participants in the experience. All the decisions are being made for them.

On the surface, it would appear that users now have such a tool, a way to explore, compare, and learn. A way to lean forward at the edge of their seats (rather than lean back, asleep, in luxury chairs) and make search work for them, rather than the other way around. However, the problem is that this tool is still not connected back into an actual search. One can visualize trends, but one cannot actually find books that best exemplify these trends.

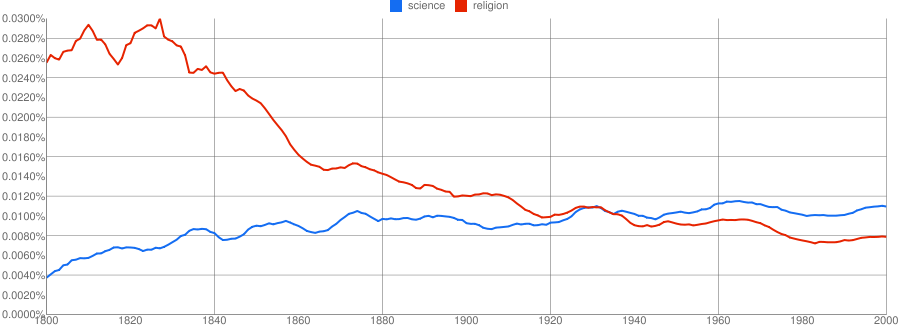

Take for example the Ngram Viewer query [science, religion]. Over the past two centuries, the relative frequency of the word religion has decreased, and that of the word science has increased. Around the 1930s, the two lines cross. Now, as a user, what I would like to be able to do is run an actual Google Books query that looks something like this: [find books that contain both science and religion, and rank in ascending order of absoluteValue(1 – science/religion)]. Put plainly, books in which the ratio of occurrences of science to occurrences of religion is closest to one should be ranked at the top of the results list. What this does is let me not only visualize the trend, but also find the best (most relevant!) books that exemplify what I think is the most interesting aspect of the trend: the point at which the lines cross.

And to be clear: I don’t just want the list of books from that decade. I want the books from any decade, but in which the relative frequencies of the terms [science] and [religion] are at their closest. The most relevant book might be from 1931. The next most relevant from 1942, and the third most relevant from 1919. I don’t know. But this is more than just selecting books by decade. It is selecting books that best exemplify a pattern that I am seeing in the data.
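To make that ranking concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Book` record, the per-book term counts, and the sample titles are all made up, and nothing here corresponds to an actual Google Books API. It only shows the scoring idea: keep books that contain both terms, then sort ascending by absoluteValue(1 – science/religion), regardless of decade.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Book:
    """Hypothetical record: a title, a publication year, and per-term counts."""
    title: str
    year: int
    counts: Dict[str, int]


def balance_score(book: Book, a: str, b: str) -> float:
    """abs(1 - count(a) / count(b)); 0.0 means the two terms occur equally often."""
    return abs(1 - book.counts[a] / book.counts[b])


def rank_by_balance(books: List[Book], a: str, b: str) -> List[Book]:
    """Keep only books containing both terms, most balanced first."""
    both = [bk for bk in books
            if bk.counts.get(a, 0) > 0 and bk.counts.get(b, 0) > 0]
    return sorted(both, key=lambda bk: balance_score(bk, a, b))


# Made-up sample data, not real books or real counts.
sample = [
    Book("Book C", 1919, {"science": 30, "religion": 20}),   # score 0.5
    Book("Book A", 1931, {"science": 100, "religion": 98}),  # score ~0.02
    Book("Book D", 1850, {"science": 0, "religion": 40}),    # lacks "science"
    Book("Book B", 1942, {"science": 50, "religion": 60}),   # score ~0.17
]

ranked = rank_by_balance(sample, "science", "religion")
print([bk.title for bk in ranked])  # ['Book A', 'Book B', 'Book C']
```

One caveat about the formula as I wrote it: the ratio is not symmetric. A book with twice as much science as religion scores 1.0, while a book with twice as much religion as science scores 0.5. If that matters, ranking by the absolute value of the log of the ratio would treat both directions equally. The point is that the user, not the engine, should get to make that choice.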

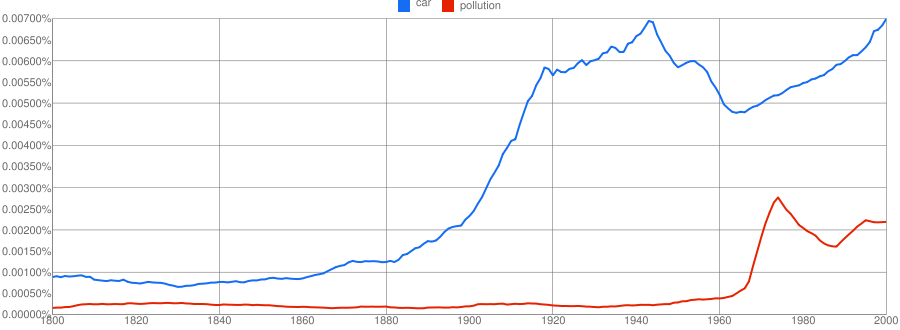

As another example, check out the Ngram Viewer query [car, pollution]. Generally, car seems to diverge from pollution, even though they both trend upwards after 1960. During the mid 1960s to mid 1970s, their divergence narrowed. Something happened then. What was it? Was it the beginnings of the environmental movement, maybe? I don’t know. What I would like to do is be able to see some of the actual books. So again, I want to query Google Books for books that contain both car and pollution, and again want to rank by the narrowness of the gap between the two term frequencies, but only among books published after 1920. There is a narrow gap in the 1800s, but only because cars were not as prevalent. So I am not interested in those books; I’m only interested in the modern era books. For a different query, I might prefer the older books, instead. Either way, I should be able to specify it.

And again, this is more than just selecting books by decade. It is about selecting books by the narrowness of the gap. The gap narrows once in the mid 1960s, when the relative frequency of [car] dips. And it narrows again in the mid 1970s when the relative frequency of [pollution] increases. So the most relevant books might be, in order, from 1964, 1973, 1966, etc. Not just from any one decade.
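The [car, pollution] query can be sketched the same way, this time with a user-chosen publication window. Again, this is only an illustration under assumptions of my own: the `BookStats` record, its fields, and the sample numbers are hypothetical, and I am taking "gap" to mean the absolute difference between each term's within-book relative frequency (occurrences divided by total words).

```python
from typing import List, NamedTuple, Optional


class BookStats(NamedTuple):
    """Hypothetical per-book statistics; not a real Google Books structure."""
    title: str
    year: int
    total_words: int
    car: int
    pollution: int


def gap(book: BookStats) -> float:
    """Absolute difference between the two terms' relative frequencies."""
    return abs(book.car - book.pollution) / book.total_words


def rank_by_gap(books: List[BookStats],
                after: Optional[int] = None,
                up_to: Optional[int] = None) -> List[BookStats]:
    """Books containing both terms, narrowest gap first, within a year window."""
    keep = [
        bk for bk in books
        if bk.car > 0 and bk.pollution > 0
        and (after is None or bk.year > after)
        and (up_to is None or bk.year <= up_to)
    ]
    return sorted(keep, key=gap)


# Made-up sample data, for illustration only.
sample = [
    BookStats("Modern D", 1966, 100_000, 100, 10),
    BookStats("Old C", 1880, 50_000, 2, 2),       # zero gap, but pre-automobile
    BookStats("Modern A", 1964, 100_000, 50, 45),
    BookStats("Modern B", 1973, 80_000, 40, 20),
]

modern = rank_by_gap(sample, after=1920)
print([bk.title for bk in modern])  # ['Modern A', 'Modern B', 'Modern D']

older = rank_by_gap(sample, up_to=1920)
print([bk.title for bk in older])   # ['Old C']
```

Note that the year window is a filter and the gap is the ranking. The results for the modern era come back ordered by how narrow the gap is, not by decade, and flipping to the older books is a one-argument change.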

This is what I mean by leaning forward, actively engaged users. If that were enabled it would be a great example of exploratory search (see my earlier posts: What you can find out, Universal search is not exploratory search, and “Improving findability” falls short of the mark). But it is not enabled. Not yet.

What Google needs to do is close the loop to give us an exciting form of exploratory search. Book search exists. Book ngram data visualization exists. Now, connect the two and let one select patterns of interest from the visualization and use those patterns as queries into the book collection. Let me, the user, take an active part in determining and creating the query that best exemplifies my information need, the ranking algorithm that best exemplifies what is relevant to me. Don’t just give me a cushier seat by ranking books by their CitationRank or some other universal signal. Let me have a hand in specifying what is interesting, and therefore relevant!

Close the loop.

Another instance of Google’s conservatism (whether you want to view it as “caution” or “lack of innovation”) is Google Scholar (GS). GS is a decent keyword search tool, and it captures first-level links well (who cites whom). But it provides almost no data analysis tools beyond that: no clustering by author; no trends over time; not even the ability to pull out publications for a given year (rather than since a given year). In contrast, Microsoft Academic Search (MSAS) provides much richer functionality, although with much poorer coverage (essentially CS only) and possibly worse basic search (I’ve not compared extensively). But MSAS also illustrates the pitfalls of additional functionality; it’s easy to get things wrong, as they frequently do (confusing authors, assigning authors to the wrong institutions, using dubious metrics to rank researchers, etc.).

So it sounds like the question boils down to the ol’ precision vs. recall conundrum. To me, there is an easy answer to that question: What is most valuable/most important to the user? If I am doing a patent search, and want to make sure that I don’t miss a single piece of prior art (well, a single piece of prior CS art, but you know what I mean), I am going to use MSAS. I don’t mind if there are a few errors, if it means that my total task time, the total seeking time, is shorter (i.e. I’ll be able to more quickly get full set coverage of all possible related work). On the other hand, if I just need to look up a paper, and know its name or author already, that may be a situation for Google.

What I dislike is not being given the choice. I thought the goal was to organize all the world’s information. Not just all the world’s precision-oriented information. If I *want* to search Google Books for books in which (#occ(science) – #occ(religion)) is as close to zero as possible, there is just no way for me to do it right now. If their OCR engine gets a few words wrong, and therefore gets the counts of science or religion wrong in this or that odd book, that doesn’t bother me. A book that should have been ranked 7th accidentally gets ranked 4th instead. I’m ok with that. I’ll live.